*Inclusion by design: exploring gender responsive designs in digital welfare* is the second of a two-report series. The first report demonstrated how Western welfare systems have long reflected the gendered disadvantages present in society, and highlighted examples of discriminatory automated decision-making systems (ADMS) used to manage digital welfare in several Western countries. It concluded by setting out gender equality principles for the digital welfare state. This report builds on those principles. There is still time to address and rectify the gendered harms that welfare systems can inflict.

The implementation of

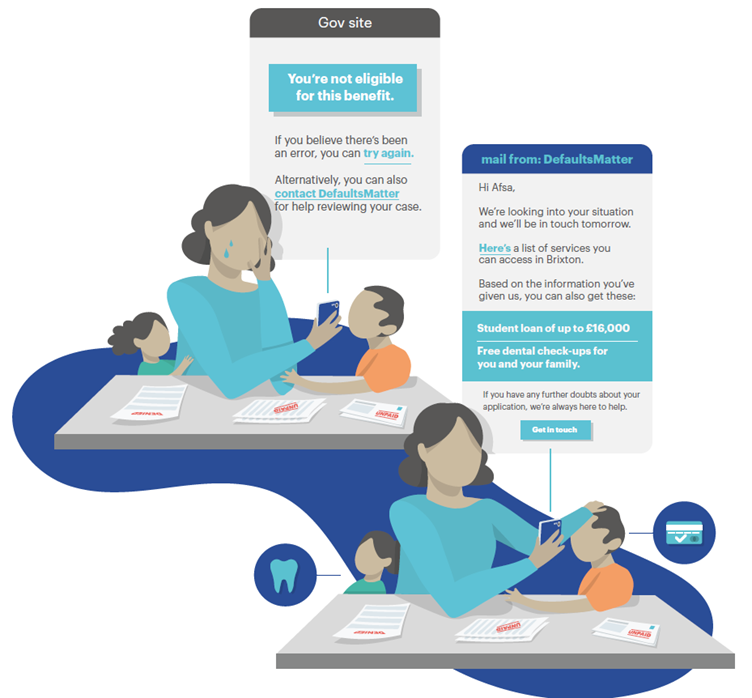

automation has been mostly a two-sided conversation between policymakers and technologists, an approach that does not take into consideration the implications of digitising old ways of working and inherited social structures. We cannot assume that technology is a neutral actor when it is history that has built it. Instead, it is

systems designed to acknowledge the historic biases that preceded them and understand the complex lives of those who seek their services that

lead to a more equitable future.

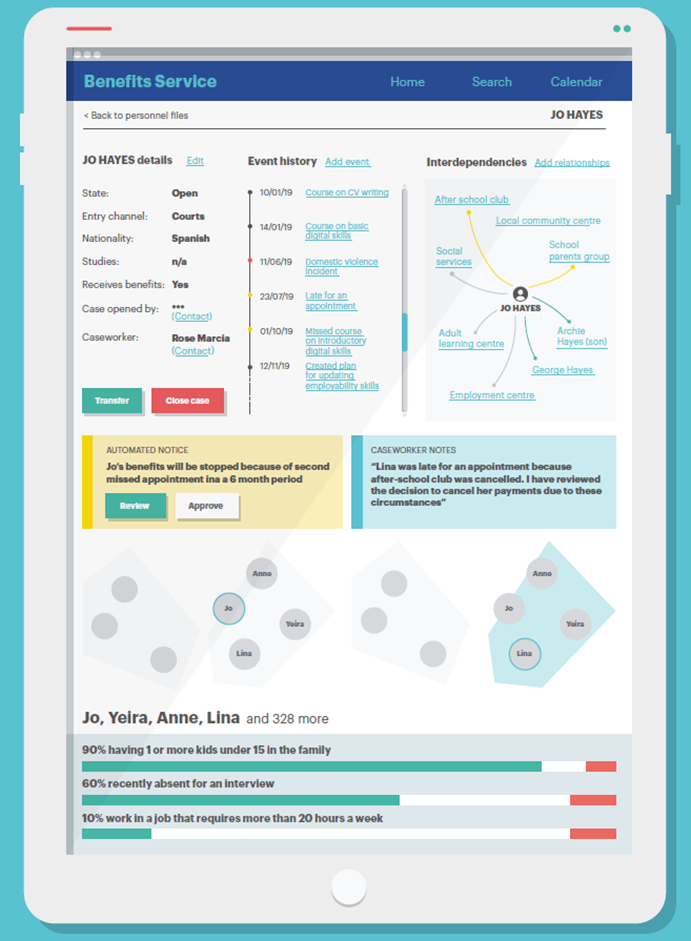

Designing digital welfare systems in this way requires an understanding of the interconnected nature of the social categories to which system users belong. In relation to gender, this could mean many things, including the increased probability of a woman being unemployed while also being more likely to be engaged in unpaid labour, such as caring for children or other dependent family members. If the public sector is going to automate the decision-making processes that manage access to welfare systems, these ADMS must include frameworks that address the intersectionality of women users.

In this regard, this report maps a way forward in which design can play a fundamental role in envisioning digital welfare services that contribute to a more gender-equitable future. Gender responsive design means compiling and using gender-relevant datasets and statistics, incorporating gender analysis and gender impact assessments, and recognising that co-design and human oversight are fundamental to avoiding the automation of errors and inequalities.

When public servants design a service, they have to think that they might one day be the very person who needs to access that service. It requires a level of empathy to understand that some public services have become a painful life event for the user. If the state cannot go through the exercise of understanding the level of trauma and sensitivity involved, and furthermore does not apply that empathy to design, it is giving a bad service.

Daniel Abadie - former Digital Secretary of Argentina